We have an application on our site that was rewritten a few years back by a developer who is no longer with the company. He attempted to do some “smart” caching things to make it fast, but I think he had a fundamental lack of understanding of how caching, or at least memcached, works.

Memcached is a really nifty, stable, memory-based key-value store. The most common use for it is caching the results of expensive operations. Let’s say you have some data you pull from a database that doesn’t change frequently. You’d cache it in memcached for some period of time so that you don’t have to hit the database on every request.

A couple of things to note about memcached. Most folks run it on a number of boxes on the network, so you still have to go across the network to get the data. [1] Memcached also, by default, has a 1MB limit on the objects/data you store in it. [2] Store lots of stuff in it, keep it in smaller objects (that you don’t mind throwing across the network), and you’ll see a pretty nice performance boost.

Unless … someone decides to not cache little things. And instead caches a big thing.

We started to notice some degradation in performance over the past few months. It finally got bad enough that I had to take a look. It only took a little bit of debugging to determine that the way the caching was implemented wasn’t helping us: it was actively hurting us. Rather than caching entries individually, it was loading up an entire set of the data and trying to cache a massive chunk of data. Which, since it was larger than the 1MB limit, would fail.

You’d end up with something like this:

- Hey, do I have this item in the cache?

- Nope, let’s generate the giant object so we can cache it

- Send it to the server to cache it

- Nope, it’s too big, can’t cache it

- Oh well, onto the next item … do I have it in the cache?

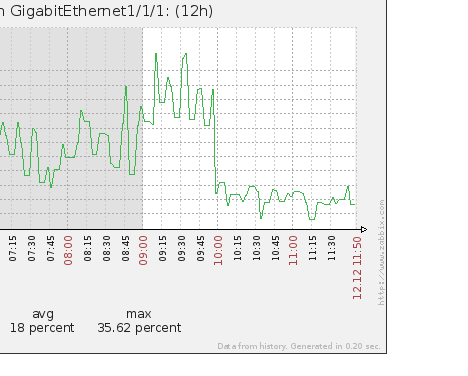

Turns out, this wasn’t just impacting performance. It was hammering our network.

The top of that graph is about 400Mb/s. The drop-off is when we rolled out the change to fix the caching (to cache individual elements rather than the entire object). It was, nearly instantaneously, a 250Mb/s drop in network traffic.
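To make the difference concrete, here's a rough Python simulation of the two approaches. It is a sketch, not our production code: `SizeLimitedCache` is a made-up in-memory stand-in that rejects anything over 1MB, and it assumes the full payload gets sent to the server before being rejected, which is how the failure looked on our network. The record sizes and request pattern are invented for the example.

```python
import pickle

ITEM_LIMIT = 1024 * 1024  # memcached's default per-item limit (1MB)


class SizeLimitedCache:
    """Hypothetical stand-in for memcached that enforces the item size limit."""

    def __init__(self):
        self._store = {}
        self.bytes_sent = 0  # bytes pushed to the "server" by set() calls
        self.rejected = 0

    def get(self, key):
        payload = self._store.get(key)
        return None if payload is None else pickle.loads(payload)

    def set(self, key, value):
        payload = pickle.dumps(value)
        # Assumption: the payload crosses the network even if it gets rejected.
        self.bytes_sent += len(payload)
        if len(payload) > ITEM_LIMIT:
            self.rejected += 1
            return False
        self._store[key] = payload
        return True


def build_record(item_id):
    """One record, roughly 2KB."""
    return str(item_id).zfill(4) * 500


def build_all_records():
    """The whole data set, roughly 2MB once serialized."""
    return {i: build_record(i) for i in range(1000)}


def lookup_bulk(cache, item_id):
    # The broken approach: cache everything under a single key.
    blob = cache.get("all-records")
    if blob is None:
        blob = build_all_records()
        cache.set("all-records", blob)  # too big, so this never sticks
    return blob[item_id]


def lookup_per_item(cache, item_id):
    # The fix: one small cache entry per record.
    key = f"record:{item_id}"
    value = cache.get(key)
    if value is None:
        value = build_record(item_id)
        cache.set(key, value)
    return value


requests = [1, 2, 3, 4, 5] * 4  # 20 lookups over 5 distinct items

bulk_cache = SizeLimitedCache()
for item_id in requests:
    lookup_bulk(bulk_cache, item_id)

item_cache = SizeLimitedCache()
for item_id in requests:
    lookup_per_item(item_cache, item_id)

print(f"bulk:     {bulk_cache.bytes_sent:,} bytes sent, {bulk_cache.rejected} rejected")
print(f"per-item: {item_cache.bytes_sent:,} bytes sent, {item_cache.rejected} rejected")
```

In the bulk version every single lookup is a miss, so the giant object gets rebuilt and shipped to the server all 20 times. In the per-item version only the 5 first-time lookups send anything, and each of those is a couple of kilobytes.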

The lesson here? Know how to use your cache layer.